I met boatloads of physicists as a master’s student at the Perimeter Institute for Theoretical Physics in Waterloo, Canada. Researchers pass through Perimeter like diplomats through my current neighborhood—the Washington, DC area—except that Perimeter’s visitors speak math instead of legalese and hardly any of them wear ties. But Nilanjana Datta, a mathematician at the University of Cambridge, stood out. She was one of the sharpest, most on-the-ball thinkers I’d ever encountered. Also, she presented two academic talks in a little black dress.

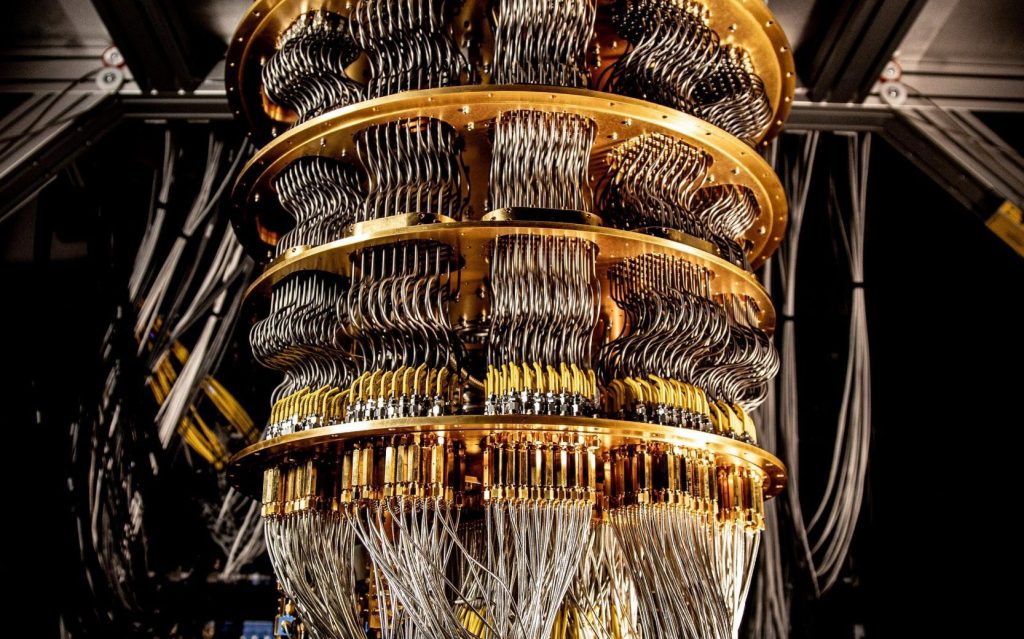

The academic year had nearly ended, and I was undertaking research at the intersection of thermodynamics and quantum information theory for the first time. My mentors and I were applying a mathematical toolkit then in vogue, thanks to Nilanjana and colleagues of hers: one-shot quantum information theory. To explain one-shot information theory, I should review ordinary information theory. Information theory is the study of how efficiently we can perform information-processing tasks, such as sending messages over a channel.

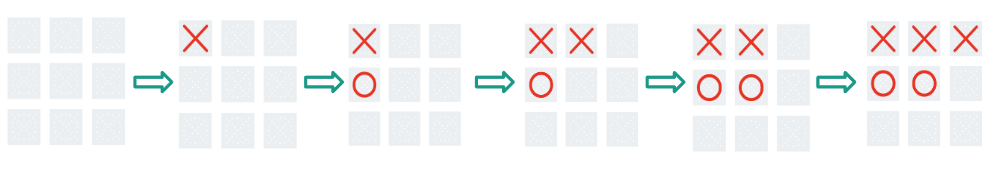

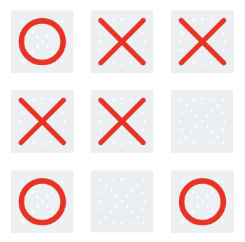

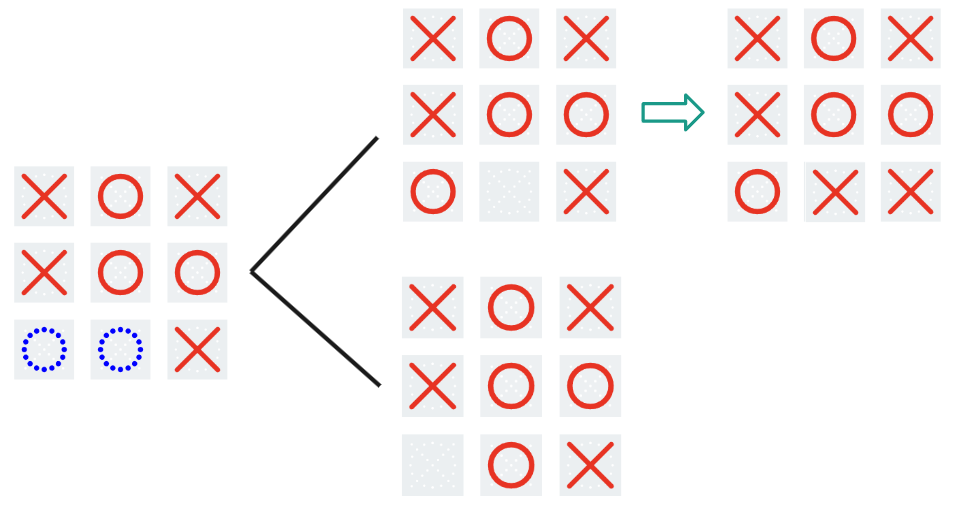

Say I want to send you $n$ copies of a message. Into how few bits (units of information) can I compress the $n$ copies? First, suppose that the message is classical, such that a telephone could convey it. The average number of bits needed per copy equals the message’s Shannon entropy, a measure of your uncertainty about which message I’m sending. Now, suppose that the message is quantum. The average number of quantum bits needed per copy is the von Neumann entropy, now a measure of your uncertainty. At least, the answer is the Shannon or von Neumann entropy in the limit as $n$ approaches infinity. This limit appears disconnected from reality, as the universe seems not to contain an infinite amount of anything, let alone telephone messages. Yet the limit simplifies the mathematics involved and approximates some real-world problems.
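For readers who like to see the symbols, here is a minimal sketch of the two entropies and of the limit just described. The notation is my assumption and doesn't appear above: $p(x)$ denotes the probability that I send message $x$, $\rho$ denotes the density matrix that describes the quantum message, and $n$ denotes the number of copies.

```latex
% Shannon entropy of a classical message X drawn with probabilities p(x)
H(X) = -\sum_{x} p(x) \log_2 p(x)

% von Neumann entropy of a quantum message described by the density matrix \rho
S(\rho) = -\operatorname{Tr}\left( \rho \log_2 \rho \right)

% Asymptotic compression: bits per copy in the many-copy limit,
% when the probability of a decoding error is required to vanish
\lim_{n \to \infty} \frac{\text{fewest bits needed for } n \text{ copies}}{n} = H(X)
```

The quantum statement has the same form, with qubits in place of bits and $S(\rho)$ in place of $H(X)$.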

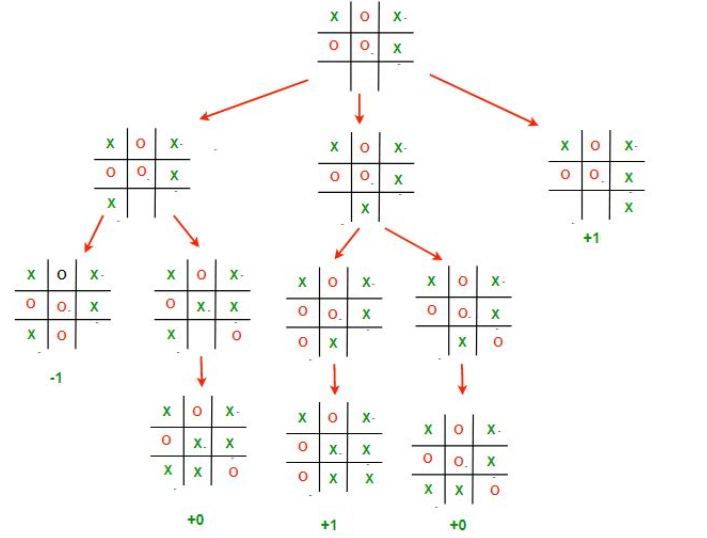

But the limit doesn’t approximate every real-world problem. What if I want to send only one copy of my message: one shot? One-shot information theory concerns how efficiently we can process finite amounts of information. Nilanjana and colleagues had defined entropies beyond Shannon’s and von Neumann’s and had proved properties of those entropies. The field’s cofounders also showed that these entropies quantify the optimal rates at which we can process finite amounts of information.

My mentors and I were applying one-shot information theory to quantum thermodynamics. I’d read papers of Nilanjana’s and spoken with her virtually (we probably used Skype back then). When I learned that she’d visit Waterloo in June, I was a kitten looking forward to a saucer of cream.

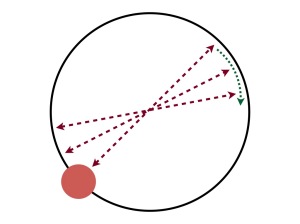

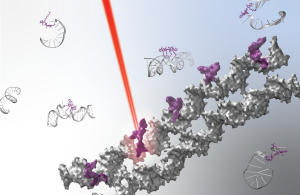

Nilanjana didn’t disappoint. First, she presented a seminar at Perimeter. I recall her discussing a resource theory (a simple information-theoretic model) for entanglement manipulation. One often models entanglement manipulators as experimentalists who can perform local operations and classical communications: each experimentalist can poke and prod the quantum system in their lab, as well as link their labs via telephone. We abbreviate the set of local operations and classical communications as LOCC. Nilanjana broadened my view to the superset SEP, the operations that map every separable (unentangled) state to a separable state.

Then, because she eats seminars for breakfast, Nilanjana presented an even more distinguished talk the same day: a colloquium. It took place at the University of Waterloo’s Institute for Quantum Computing (IQC), a nearly half-hour walk from Perimeter. Would I be willing to escort Nilanjana between the two institutes? I most certainly would.

Nilanjana and I arrived at the IQC auditorium before anyone else except the colloquium’s host, Debbie Leung. Debbie is a University of Waterloo professor and another of the most rigorous quantum information theorists I know. I sat a little behind the two of them and marveled. Here were two of the scions of the science I was joining. Pinch me.

My relationship with Nilanjana deepened over the years. The first year of my PhD, she hosted a seminar by me at the University of Cambridge (although I didn’t present a colloquium later that day). Afterward, I wrote a Quantum Frontiers post about her research with PhD student Felix Leditzky. The two of them introduced me to second-order asymptotics. Second-order asymptotics dictate the rate at which one-shot entropies approach standard entropies as $n$ (the number of copies of a message I’m compressing, say) grows large.
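To give a flavor of second-order asymptotics, here is a sketch of the standard textbook result for the simplest case: compressing $n$ independent copies of a classical message. It is not taken from Nilanjana and Felix's work, and the symbols are my assumptions: $\varepsilon$ is the error probability I tolerate, $\ell^*(n, \varepsilon)$ is the fewest bits that suffice, $V(X)$ is the variance of the surprisal $-\log_2 p(X)$, and $\Phi^{-1}$ is the inverse of the standard normal cumulative distribution function.

```latex
% Fewest bits needed to compress n i.i.d. copies with error probability at most \varepsilon
\ell^*(n, \varepsilon) = n H(X) + \sqrt{n V(X)} \, \Phi^{-1}(1 - \varepsilon) + O(\log n)

% Variance of the surprisal (sometimes called the varentropy)
V(X) = \sum_{x} p(x) \left( -\log_2 p(x) - H(X) \right)^2

% Dividing by n: the per-copy cost approaches the Shannon entropy at a rate of order 1/\sqrt{n}
\frac{\ell^*(n, \varepsilon)}{n} = H(X) + \sqrt{\frac{V(X)}{n}} \, \Phi^{-1}(1 - \varepsilon) + O\!\left( \frac{\log n}{n} \right)
```

The first term is the familiar Shannon entropy. The second term is the second-order correction, which shrinks like $1/\sqrt{n}$ per copy as $n$ grows large.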

The following year, Nilanjana and colleagues hosted me at “Beyond i.i.d. in Information Theory,” an annual conference dedicated to one-shot information theory. We convened in the mountains of Banff, Canada, about which I wrote another blog post. Come to think of it, Nilanjana lies behind many of my blog posts, as she lies behind many of my papers.

But I haven’t explained about the little black dress. Nilanjana wore one when presenting at Perimeter and the IQC. That year, I concluded that pants and shorts caused me so much discomfort, I’d wear only skirts and dresses. So I stuck out in physics gatherings like a theorem in a newspaper. My mother had schooled me in the historical and socioeconomic significance of the little black dress. Coco Chanel invented the slim, simple, elegant dress style during the 1920s. It helped free women from stifling, time-consuming petticoats and corsets: a few decades beforehand, dressing could last much of the morning—and then one would change clothes for the afternoon and then for the evening. The little black dress offered women freedom of movement, improved health, and control over their schedules. Better, the little black dress could suit most activities, from office work to dinner with friends.

Yet I didn’t recall ever having seen anyone present physics in a little black dress.

I almost never use this verb, but Nilanjana rocked that little black dress. She imbued it with all the professionalism and competence ever associated with it. Also, Nilanjana had long, dark hair, like mine (although I’ve never achieved her hair’s length); and she wore it loose, as I liked to. I recall admiring the hair hanging down her back after she received a question during the IQC colloquium. She’d whirled around to write the answer on the board, in the rapid-fire manner characteristic of her intellect. If one of the most incisive scientists I knew could wear dresses and long hair, then so could I.

Felix is now an assistant professor at the University of Illinois in Urbana-Champaign. I recently spoke with him and Mark Wilde, another one-shot information theorist and a guest blogger on Quantum Frontiers. The conversation led me to reminisce about the day I met Nilanjana. I haven’t visited Cambridge in years, and my research has expanded from one-shot thermodynamics into many-body physics. But one never forgets the classics.