On December 6, I gave a keynote address at the Q2B 2023 Conference in Silicon Valley. Here is a transcript of my remarks.

Toward quantum value

The theme of this year’s Q2B meeting is “The Roadmap to Quantum Value.” I interpret “quantum value” as meaning applications of quantum computing that have practical utility for end-users in business. So I’ll begin by reiterating a point I have made repeatedly in previous appearances at Q2B. As best we currently understand, the path to economic impact is the road through fault-tolerant quantum computing. And that poses daunting challenges for our field and for the quantum industry.

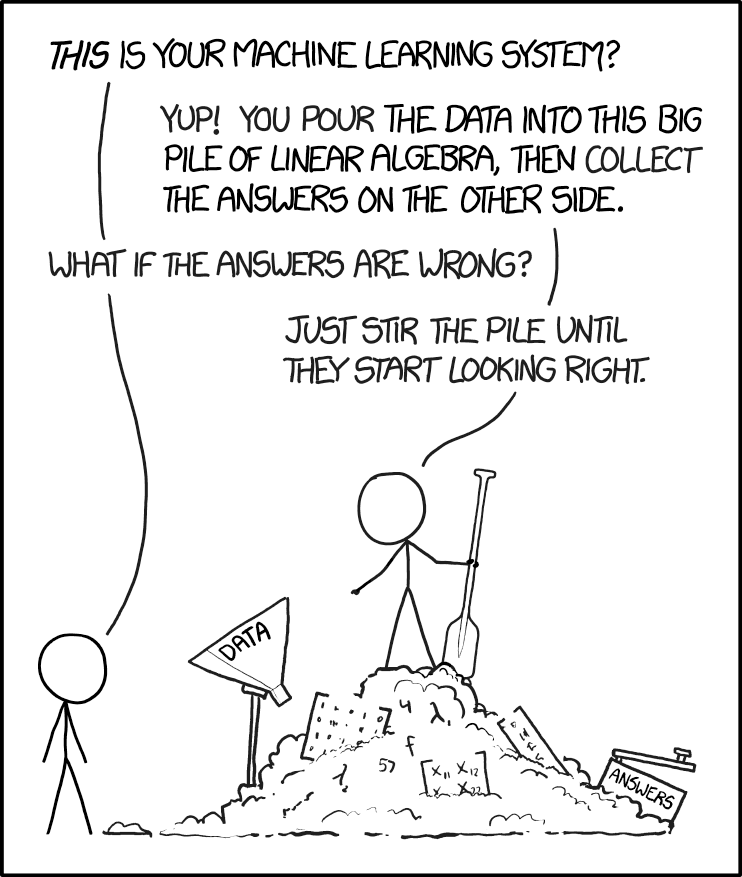

We are in the NISQ era. NISQ (rhymes with “risk”) is an acronym meaning “Noisy Intermediate-Scale Quantum.” Here “intermediate-scale” conveys that current quantum computing platforms with on the order of 100 qubits are difficult to simulate by brute force using the most powerful currently existing supercomputers. “Noisy” reminds us that today’s quantum processors are not error-corrected, and noise is a serious limitation on their computational power. NISQ technology already has noteworthy scientific value. But as of now there is no proposed application of NISQ computing with commercial value for which quantum advantage has been demonstrated when compared to the best classical hardware running the best algorithms for solving the same problems. Furthermore, currently there are no persuasive theoretical arguments indicating that commercially viable applications will be found that do not use quantum error-correcting codes and fault-tolerant quantum computing.

A useful survey of quantum computing applications, over 300 pages long, recently appeared, providing rough estimates of end-to-end run times for various quantum algorithms. This is hardly the last word on the subject — new applications are continually proposed, and better implementations of existing algorithms continually arise. But it is a valuable snapshot of what we understand today, and it is sobering.

There can be quantum advantage in some applications of quantum computing to optimization, finance, and machine learning. But in this application area, the speedups are typically at best quadratic, meaning the quantum run time scales as the square root of the classical run time. So the advantage kicks in only for very large problem instances and deep circuits, which we won’t be able to execute without error correction.
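To see why a quadratic speedup pays off only at large scale, here is a rough break-even estimate. The per-step times $t_c$ (classical) and $t_q$ (quantum) are illustrative symbols introduced for this sketch, and constant factors are ignored:

$$T_{\text{classical}} = N\,t_c, \qquad T_{\text{quantum}} = \sqrt{N}\,t_q \quad\Longrightarrow\quad T_{\text{quantum}} < T_{\text{classical}} \iff N > \left(\frac{t_q}{t_c}\right)^{2}.$$

For instance, if an error-corrected logical operation were $10^{5}$ times slower than a classical one (a hypothetical ratio, not a figure from the survey), the break-even point would be $N \approx 10^{10}$ classical steps, and the quantum circuit would need about $\sqrt{N} = 10^{5}$ sequential steps just to break even.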

Larger polynomial advantage and perhaps superpolynomial advantage are possible in applications to chemistry and materials science, but these may require at least hundreds of very well-protected logical qubits, and hundreds of millions of very high-fidelity logical gates, if not more. Quantum fault tolerance will be needed to run these applications, and fault tolerance has a hefty cost in both the number of physical qubits and the number of physical gates required. We should also bear in mind that the speed of logical gates is relevant, since the run time as measured by the wall clock will be an important determinant of the value of quantum algorithms.

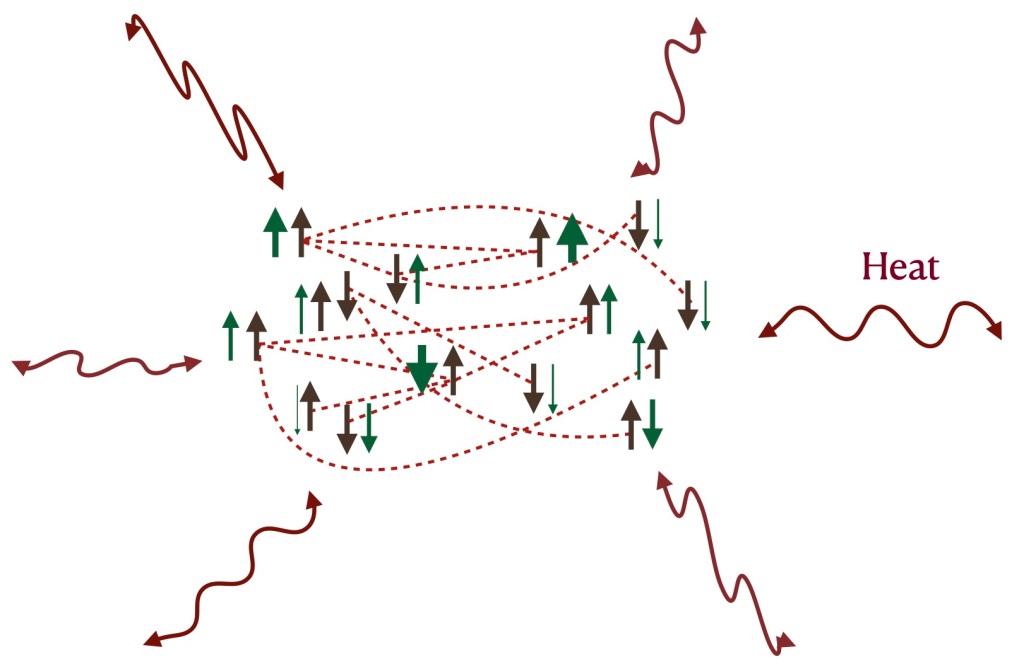

Overcoming noise in quantum devices

Already in today’s quantum processors steps are taken to address limitations imposed by the noise — we use error mitigation methods like zero noise extrapolation or probabilistic error cancellation. These methods work effectively at extending the size of the circuits we can execute with useful fidelity. But the asymptotic cost scales exponentially with the size of the circuit, so error mitigation alone may not suffice to reach quantum value. Quantum error correction, on the other hand, scales much more favorably, like a power of a logarithm of the circuit size. But quantum error correction is not practical yet. To make use of it, we’ll need better two-qubit gate fidelities, many more physical qubits, robust systems to control those qubits, as well as the ability to perform fast and reliable mid-circuit measurements and qubit resets; all these are technically demanding goals.

To get a feel for the overhead cost of fault-tolerant quantum computing, consider the surface code — it’s presumed to be the best near-term prospect for achieving quantum error correction, because it has a high accuracy threshold and requires only geometrically local processing in two dimensions. Once the physical two-qubit error rate is below the threshold value of about 1%, the probability of a logical error per error correction cycle declines exponentially as we increase the code distance d:

$$P_{\text{logical}} = (0.1)\left(\frac{P_{\text{physical}}}{P_{\text{threshold}}}\right)^{(d+1)/2}$$

where the number of physical qubits in the code block (which encodes a single protected qubit) is the distance squared.
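The logarithmic scaling of the overhead can be read off from this formula. As a rough sketch, assume that a circuit with $N$ qubit-cycles in total needs a logical error probability per cycle of about $1/N$ (a simplifying assumption that ignores constant factors). Setting $P_{\text{logical}} = 1/N$ and solving for $d$ gives

$$\frac{d+1}{2}\,\ln\!\left(\frac{P_{\text{threshold}}}{P_{\text{physical}}}\right) = \ln\!\left(\frac{N}{10}\right) \quad\Longrightarrow\quad d = \frac{2\,\ln(N/10)}{\ln\!\left(P_{\text{threshold}}/P_{\text{physical}}\right)} - 1 ,$$

so the code distance grows like $\log N$, and the block size $d^2$ grows only like $(\log N)^2$.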

Suppose we wish to execute a circuit with 1000 qubits and 100 million time steps. Then we want the probability of a logical error per cycle to be $10^{-11}$. Assuming the physical error rate is $10^{-3}$, better than what is currently achieved in multi-qubit devices, from this formula we infer that we need a code distance of 19, and hence 361 physical qubits to encode each logical qubit, and a comparable number of ancilla qubits for syndrome measurement. That comes to over 700 physical qubits per logical qubit, or a total of nearly a million physical qubits. If the physical error rate improves to $10^{-4}$ someday, that cost is reduced, but we’ll still need hundreds of thousands of physical qubits if we rely on the surface code to protect this circuit.
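The arithmetic in this estimate can be checked with a short script. This is a minimal sketch, not a resource estimator: the function names are made up for illustration, the ancilla count is approximated as equal to the data-qubit count (the text says only "comparable"), and exact fractions are used so that the comparison against the target is not affected by floating-point rounding.

```python
from fractions import Fraction


def logical_error_per_cycle(p_phys: Fraction, p_th: Fraction, d: int) -> Fraction:
    """Heuristic from the text: 0.1 * (p_phys / p_th) ** ((d + 1) / 2), for odd d."""
    return Fraction(1, 10) * (p_phys / p_th) ** ((d + 1) // 2)


def min_code_distance(p_phys: Fraction, p_th: Fraction, target: Fraction) -> int:
    """Smallest odd distance whose logical error per cycle is at most `target`."""
    if p_phys >= p_th:
        raise ValueError("physical error rate must be below threshold")
    d = 3
    while logical_error_per_cycle(p_phys, p_th, d) > target:
        d += 2
    return d


def physical_qubits(n_logical: int, d: int) -> int:
    """d*d data qubits plus (assumed) d*d ancilla qubits per logical qubit."""
    return n_logical * 2 * d * d


if __name__ == "__main__":
    n_logical, n_steps = 1000, 10**8
    target = Fraction(1, n_logical * n_steps)  # 10^-11 per cycle
    p_th = Fraction(1, 100)                    # threshold of about 1%
    for p_phys in (Fraction(1, 1000), Fraction(1, 10000)):
        d = min_code_distance(p_phys, p_th, target)
        print(float(p_phys), d, physical_qubits(n_logical, d))
```

Under these assumptions the sketch gives distance 19 and 722,000 physical qubits at a physical error rate of $10^{-3}$, and distance 9 and 162,000 physical qubits at $10^{-4}$.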

Progress toward quantum error correction

The study of error correction is gathering momentum, and I’d like to highlight some recent experimental and theoretical progress. Specifically, I’ll remark on three promising directions, all with the potential to hasten the arrival of the fault-tolerant era: erasure conversion, biased noise, and more efficient quantum codes.

Erasure conversion

Error correction is more effective if we know when and where the errors occurred. To appreciate the idea, consider the case of a classical repetition code that protects against bit flips. If we don’t know which bits have errors we can decode successfully by majority voting, assuming that fewer than half the bits have errors. But if errors are heralded then we can decode successfully by just looking at any one of the undamaged bits. In quantum codes the details are more complicated but the same principle applies — we can recover more effectively if so-called erasure errors dominate; that is, if we know which qubits are damaged and in which time steps. “Erasure conversion” means fashioning a processor such that the dominant errors are erasure errors.
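The classical repetition-code comparison can be made concrete with a small sketch. The function names are illustrative, and the erasure model assumes, as in the paragraph above, that every bit not flagged as damaged is correct.

```python
from collections import Counter
from typing import Optional, Sequence


def decode_majority(received: Sequence[int]) -> int:
    """Unheralded bit flips: return the majority value (use odd lengths to avoid ties)."""
    return Counter(received).most_common(1)[0][0]


def decode_with_erasures(received: Sequence[Optional[int]]) -> int:
    """Heralded errors: damaged positions are marked None; any surviving bit is correct."""
    for bit in received:
        if bit is not None:
            return bit
    raise ValueError("every bit was erased")


if __name__ == "__main__":
    # The bit 1 encoded in a 5-bit repetition code.
    print(decode_majority([1, 1, 0, 1, 0]))                    # two unknown flips: recovered
    print(decode_majority([0, 0, 0, 1, 1]))                    # three unknown flips: decoding fails
    print(decode_with_erasures([None, None, None, None, 1]))   # four heralded errors: recovered
```

With five bits, majority voting tolerates at most two unheralded flips, while the heralded decoder tolerates four erasures.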

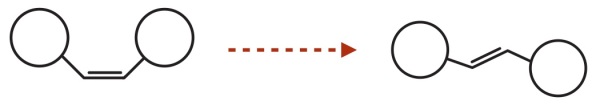

We can make use of this idea if the dominant errors exit the computational space of the qubit, so that an error can be detected without disturbing the coherence of undamaged qubits. One realization is with alkaline-earth Rydberg atoms in optical tweezers, where 0 is encoded as a low energy state, and 1 is a highly excited Rydberg state. The dominant error is the spontaneous decay of the 1 to a lower energy state. But if the atomic level structure and the encoding allow, 1 usually decays not to a 0, but rather to another state g. We can check whether the g state is occupied, to detect whether or not the error occurred, without disturbing a coherent superposition of 0 and 1.

Erasure conversion can also be arranged in superconducting devices, by using a so-called dual-rail encoding of the qubit in a pair of transmons or a pair of microwave resonators. With two resonators, for example, we can encode a qubit by placing a single photon in one resonator or the other. The dominant error is loss of the photon, causing either the 01 state or the 10 state to decay to 00. One can check whether the state is 00, detecting whether the error occurred, without disturbing a coherent superposition of 01 and 10.
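In symbols, here is a sketch of why the check is harmless. It rests on two idealizing assumptions: photon loss is the only error, and both resonators lose photons at the same rate, so that a single loss probability $\gamma$ applies to both basis states:

$$|\psi\rangle = \alpha\,|01\rangle + \beta\,|10\rangle \;\longrightarrow\; \rho = (1-\gamma)\,|\psi\rangle\langle\psi| + \gamma\,|00\rangle\langle 00| .$$

Measuring the projector $\Pi = |00\rangle\langle 00|$ flags an erasure with probability $\gamma$. Otherwise the state is projected back onto $|\psi\rangle$ with $\alpha$ and $\beta$ unchanged, because $\Pi$ does not distinguish $|01\rangle$ from $|10\rangle$.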

Erasure detection has been successfully demonstrated in recent months, for both atomic (here and here) and superconducting (here and here) qubit encodings.

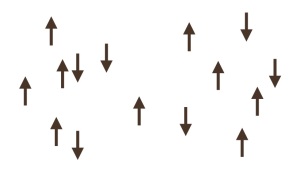

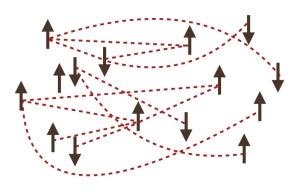

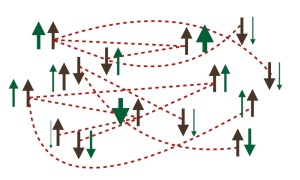

Biased noise

Another setting in which the effectiveness of quantum error correction can be enhanced is when the noise is highly biased. Quantum error correction is more difficult than classical error correction partly because more types of errors can occur — a qubit can flip in the standard basis, or it can flip in the complementary basis, what we call a phase error. In suitably designed quantum hardware the bit flips are highly suppressed, so we can concentrate the error-correcting power of the code on protecting against phase errors. For this scheme to work, it is important that phase errors occurring during the execution of a quantum gate do not propagate to become bit-flip errors. And it was realized just a few years ago that such bias-preserving gates are possible for qubits encoded in continuous variable systems like microwave resonators.

Specifically, we may consider a cat code, in which the encoded 0 and encoded 1 are coherent states, well separated in phase space. Then bit flips are exponentially suppressed as the mean photon number in the resonator increases. The main source of error, then, is photon loss from the resonator, which induces a phase error for the cat qubit, with an error rate that increases only linearly with photon number. We can then strike a balance, choosing a photon number in the resonator large enough to provide physical protection against bit flips, and then use a classical code like the repetition code to build a logical qubit well protected against phase flips as well.
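The trade-off can be summarized with scaling forms commonly quoted for two-component cat qubits, with encoded states built from the coherent states $|\alpha\rangle$ and $|{-\alpha}\rangle$. Here $\bar n = |\alpha|^2$ is the mean photon number and $\kappa$ is the single-photon loss rate. The constant $c$ and the prefactors are assumptions that depend on how the cat states are stabilized:

$$\langle \alpha | {-\alpha} \rangle = e^{-2|\alpha|^2}, \qquad \Gamma_{\text{bit flip}} \propto e^{-c\,\bar n}, \qquad \Gamma_{\text{phase flip}} \approx \kappa\,\bar n .$$

Each added photon shrinks the bit-flip rate by a constant factor while the phase-flip rate grows only in proportion to $\bar n$, which is why a modest photon number combined with a repetition code against phase flips can be a good operating point.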

Work on such repetition cat codes is ongoing (see here, here, and here), and we can expect to hear about progress in that direction in the coming months.

More efficient codes

Another exciting development has been the recent discovery of quantum codes that are far more efficient than the surface code. These include constant-rate codes, in which the number of protected qubits scales linearly with the number of physical qubits in the code block, in contrast to the surface code, which protects just a single logical qubit per block. Furthermore, such codes can have constant relative distance, meaning that the distance of the code, a rough measure of how many errors can be corrected, scales linearly with the block size rather than the square root scaling attained by the surface code.

These new high-rate codes can have a relatively high accuracy threshold and can be efficiently decoded, and schemes for executing fault-tolerant logical gates are currently under development.

A drawback of the high-rate codes is that, to extract error syndromes, geometrically local processing in two dimensions is not sufficient — long-range operations are needed. Nonlocality can be achieved through movement of qubits in neutral atom tweezer arrays or ion traps, or one can use the native long-range coupling in an ion trap processor. Long-range coupling is more challenging to achieve in superconducting processors, but should be possible.

An example with potential near-term relevance is a recently discovered code with distance 12 and 144 physical qubits. In contrast to the surface code with similar distance and length, which encodes just a single logical qubit, this code protects 12 logical qubits, a significant improvement in encoding efficiency.
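The comparison amounts to simple bookkeeping, sketched below. The class name is illustrative, the surface code is taken to use $d^2$ qubits per block as stated earlier, and only the qubits in the code block are counted (ancilla qubits for syndrome measurement are left out for both codes).

```python
from fractions import Fraction
from typing import NamedTuple


class CodeParams(NamedTuple):
    n: int  # physical qubits in the code block
    k: int  # logical qubits protected
    d: int  # code distance

    def rate(self) -> Fraction:
        """Encoding rate k/n."""
        return Fraction(self.k, self.n)


high_rate = CodeParams(n=144, k=12, d=12)       # the code described in the text
surface = CodeParams(n=12 * 12, k=1, d=12)      # surface code block of distance 12

# Qubits needed to protect 12 logical qubits at distance 12 with each code.
surface_total = (high_rate.k // surface.k) * surface.n

if __name__ == "__main__":
    print(high_rate.rate(), surface.rate())     # 1/12 versus 1/144
    print(high_rate.n, surface_total)           # 144 versus 1728
```

By this count, protecting the same 12 logical qubits with surface code blocks takes 12 times as many qubits.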

The quest for practical quantum error correction offers numerous examples like these of co-design. Quantum error correction schemes are adapted to the features of the hardware, and ideas about quantum error correction guide the realization of new hardware capabilities. This fruitful interplay will surely continue.

An exciting time for Rydberg atom arrays

In this year’s hardware news, now is a particularly exciting time for platforms based on Rydberg atoms trapped in optical tweezer arrays. We can anticipate that Rydberg platforms will lead the progress in quantum error correction for at least the next few years, if two-qubit gate fidelities continue to improve. Thousands of qubits can be controlled, and geometrically nonlocal operations can be achieved by reconfiguring the atomic positions. Further improvement in error correction performance might be possible by means of erasure conversion. Significant progress in error correction using Rydberg platforms is reported in a paper published today.

But there are caveats. So far, repeatable error syndrome measurement has not been demonstrated. For that purpose, continuous loading of fresh atoms needs to be developed. And both the readout and atomic movement are relatively slow, which limits the clock speed.

Movability of atomic qubits will be highly enabling in the short run. But in the longer run, movement imposes serious limitations on clock speed unless much faster movement can be achieved. As things currently stand, one can’t rapidly accelerate an atom without shaking it loose from an optical tweezer, or rapidly accelerate an ion without heating its motional state substantially. To attain practical quantum computing using Rydberg arrays, or ion traps, we’ll eventually need to make the clock speed much faster.

Cosmic rays!

To be fair, other platforms face serious threats as well. One is the vulnerability of superconducting circuits to ionizing radiation. Cosmic ray muons for example will occasionally deposit a large amount of energy in a superconducting circuit, creating many phonons which in turn break Cooper pairs and induce qubit errors in a large region of the chip, potentially overwhelming the error-correcting power of the quantum code. What can we do? We might go deep underground to reduce the muon flux, but that’s expensive and inconvenient. We could add an additional layer of coding to protect against an event that wipes out an entire surface code block; that would increase the overhead cost of error correction. Or maybe modifications to the hardware can strengthen robustness against ionizing radiation, but it is not clear how to do that.

Outlook

Our field and the quantum industry continue to face a pressing question: How will we scale up to quantum computing systems that can solve hard problems? The honest answer is: We don’t know yet. All proposed hardware platforms need to overcome serious challenges. Whatever technologies may seem to be in the lead over, say, the next 10 years might not be the best long-term solution. For that reason, it remains essential at this stage to develop a broad array of hardware platforms in parallel.

Today’s NISQ technology is already scientifically useful, and that scientific value will continue to rise as processors advance. The path to business value is longer, and progress will be gradual. Above all, we have good reason to believe that to attain quantum value, to realize the grand aspirations that we all share for quantum computing, we must follow the road to fault tolerance. That awareness should inform our thinking, our strategy, and our investments now and in the years ahead.